
Speechmax offers online text to speech with MP3 output for Indian voices. Click the Create Audio button to generate a unique, human-like voice in Hindi and English. Click the Play button to listen to the audio, then click Download to get your audio file.

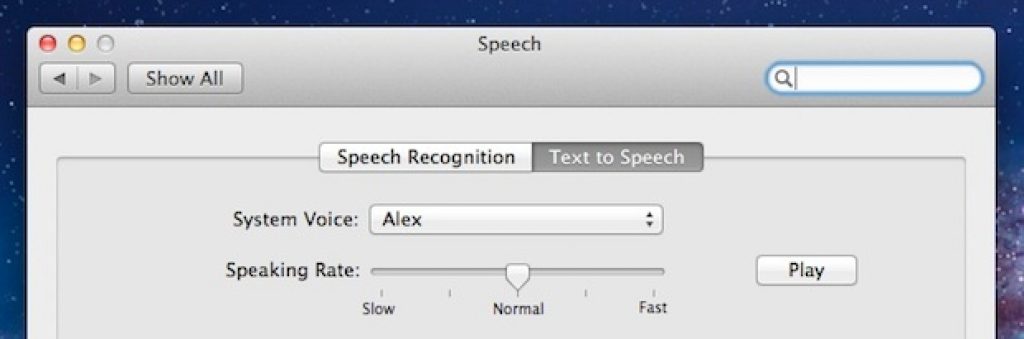

Better Voices Text To Speech: male and female voices included. Transform any text to speech and listen to natural-sounding voices. Text to speech software reads text aloud, turning words into sound. By selecting a good text to speech voice (also known as 'voice fonts' or 'voice software'), you can improve the listening experience. It includes an editor with a voice recorder to record your own words. Text To Speech for Mac is useful whether you want guides read to you while you are busy, are trying to pick up a new foreign language, or are supporting specially-abled students.

Evaluate Custom Speech accuracy

In this article, you learn how to quantitatively measure and improve the accuracy of Microsoft's speech-to-text models or your own custom models. Audio + human-labeled transcription data is required to test accuracy, and 30 minutes to 5 hours of representative audio should be provided.

Accuracy is measured as word error rate (WER). WER counts the incorrectly identified words and divides that count by the total number of words N in the human-labeled (reference) transcript. Finally, that number is multiplied by 100% to calculate the WER: WER = (I + D + S) / N × 100%.

Incorrectly identified words fall into three categories:

- Insertion (I): Words that are incorrectly added in the hypothesis transcript
- Deletion (D): Words that are undetected in the hypothesis transcript
- Substitution (S): Words that were substituted between reference and hypothesis

If you want to replicate WER measurements locally, you can use sclite from SCTK.
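The WER calculation described above can be sketched in Python. This is a minimal illustration, not the scoring used by the Custom Speech portal or by sclite: the names `WerResult` and `word_error_rate`, the lowercase whitespace tokenization, and the way ties between equally good alignments are broken are all assumptions made for the example.

```python
from typing import NamedTuple


class WerResult(NamedTuple):
    substitutions: int
    deletions: int
    insertions: int
    reference_words: int

    @property
    def wer(self) -> float:
        """WER in percent: (S + D + I) / N * 100."""
        errors = self.substitutions + self.deletions + self.insertions
        return 100.0 * errors / self.reference_words


def word_error_rate(reference: str, hypothesis: str) -> WerResult:
    # Assumption: words are compared case-insensitively after splitting on whitespace.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # cost[i][j] = (total errors, S, D, I) for aligning ref[:i] with hyp[:j].
    cost = [[(0, 0, 0, 0)] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(1, len(ref) + 1):
        cost[i][0] = (i, 0, i, 0)  # every reference word deleted
    for j in range(1, len(hyp) + 1):
        cost[0][j] = (j, 0, 0, j)  # every hypothesis word inserted

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            if ref[i - 1] == hyp[j - 1]:
                cost[i][j] = cost[i - 1][j - 1]  # match, no new error
                continue
            e, s, d, n = cost[i - 1][j - 1]
            substitution = (e + 1, s + 1, d, n)
            e, s, d, n = cost[i - 1][j]
            deletion = (e + 1, s, d + 1, n)
            e, s, d, n = cost[i][j - 1]
            insertion = (e + 1, s, d, n + 1)
            # Tuples compare on total errors first, so this keeps a minimal alignment.
            cost[i][j] = min(substitution, deletion, insertion)

    _, s, d, n = cost[-1][-1]
    return WerResult(s, d, n, len(ref))


result = word_error_rate(
    "the quick brown fox jumps",
    "the quick brown box jumps over",
)
print(result, result.wer)
```

Here the hypothesis substitutes one word and inserts one word against a five-word reference, so the sketch reports two errors over N = 5. When several alignments have the same total error count, the split between S, D, and I can differ between tools even though the WER itself is the same.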

A WER of 5%-10% is considered to be good quality and is ready to use. A WER of 20% is acceptable; however, you may want to consider additional training. A WER of 30% or more signals poor quality and requires customization and training.

How the errors are distributed is important. Insertion errors mean that the audio was recorded in a noisy environment and crosstalk may be present, causing recognition issues. Deletion errors usually point to weak audio signal strength; to resolve this issue, you'll need to collect audio data closer to the source. Substitution errors are often encountered when an insufficient sample of domain-specific terms has been provided as either human-labeled transcriptions or related text.

By analyzing individual files, you can determine what type of errors exist, and which errors are unique to a specific file. Understanding issues at the file level will help you target improvements.

Create a test

If you'd like to test the quality of Microsoft's speech-to-text baseline model or a custom model that you've trained, you can compare two models side by side to evaluate accuracy. Typically, a custom model is compared with Microsoft's baseline model. Navigate to Speech-to-text > Custom Speech > Testing. Select Evaluate accuracy. Give the test a name, description, and select your audio + human-labeled transcription dataset.

Side-by-side comparison

Once the test is complete, indicated by the status change to Succeeded, you'll find a WER number for both models included in your test. Click on the test name to view the testing detail page. This detail page lists all the utterances in your dataset, indicating the recognition results of the two models alongside the transcription from the submitted dataset.

Content from different scenarios rates differently in the word error rate (WER), and different error types are most common in each scenario. For example, an error type can be low, except when other people talk in the background, or it can be high.
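The WER quality bands described above can be expressed as a small helper. The text only names three reference points (5%-10%, 20%, and 30% or more), so the exact cut-offs between them, along with the function names `wer_quality` and `dominant_error`, are assumptions chosen for this sketch.

```python
def wer_quality(wer_percent: float) -> str:
    """Map a WER percentage onto the quality bands named in the text.

    Assumption: anything up to 10% counts as "good", and anything
    between 10% and 30% counts as "acceptable".
    """
    if wer_percent < 0:
        raise ValueError("WER cannot be negative")
    if wer_percent <= 10:
        return "good"        # ready to use
    if wer_percent < 30:
        return "acceptable"  # consider additional training
    return "poor"            # requires customization and training


def dominant_error(insertions: int, deletions: int, substitutions: int) -> str:
    """Return the most frequent error type, to show how errors are distributed."""
    counts = {
        "insertion": insertions,
        "deletion": deletions,
        "substitution": substitutions,
    }
    # On a tie, max() returns the first key in the order listed above.
    return max(counts, key=counts.get)


print(wer_quality(7.5), wer_quality(20), wer_quality(35))
print(dominant_error(insertions=3, deletions=10, substitutions=2))
```

Looking at the dominant error type per file, not only the overall WER, is what lets you decide which kind of data to collect next.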
By listening to the audio and comparing recognition results in each column, which shows the human-labeled transcription and the results for two speech-to-text models, you can decide which model meets your needs and where additional training and improvements are required.

Improve Custom Speech accuracy

Speech recognition scenarios vary by audio quality and language (vocabulary and speaking style). Four common scenarios illustrate the range. Audio quality can be low (8 kHz, possibly 2 humans on 1 audio channel, possibly compressed) or varied, including varied microphone use and added music. A voice assistant (such as Cortana, or a drive-through window) has an entity-heavy vocabulary (song titles, products, locations). Dictation covers instant messages, notes, and search. Vocabulary can also be varied, drawn from meetings, recited speech, and musical lyrics.

Different scenarios produce different quality outcomes. Products and people's names can cause errors. Error rates can be medium, due to song titles, product names, or locations, or can be high due to music, noises, or microphone quality.

Use the Custom Speech portal to view the quality of a baseline model. The portal reports insertion, substitution, and deletion error rates that are combined in the WER quality rate. Determining the components of the WER (number of insertion, deletion, and substitution errors) helps determine what kind of data to add to improve the model.

Improve model recognition

You can reduce recognition errors by adding training data in the Custom Speech portal. Plan to maintain your custom model by adding source materials periodically. Your custom model needs additional training to stay aware of changes to your entities. For example, you may need updates to product names, song names, or new service locations. The following sections describe how each kind of additional training data can reduce errors.

Add audio with human-labeled transcripts

Audio with human-labeled transcripts offers the greatest accuracy improvements if the audio comes from the target use case. Samples must cover the full scope of speech.
Assure that your sample includes the full scope of speech you want to detect. Training with audio will bring the most benefits if the audio is also hard to understand for humans. In most cases, you should start training by just using related text. For languages where the base models already offer very good recognition results in most scenarios, it's probably enough to train with related text. Custom Speech can only capture word context to reduce substitution errors, not insertion or deletion errors. Avoid samples that include transcription errors, but do include a diversity of audio quality. Avoid sentences that are not related to your problem domain. Unrelated sentences can harm your model. When the quality of transcripts varies, you can duplicate exceptionally good sentences (like excellent transcriptions that include key phrases) to increase their weight.

It can take several days for a training operation to complete. To improve the speed of training, make sure to create your Speech service subscription in a region with the dedicated hardware for training.

In cases when you change the base model used for training, and you have audio in the training dataset, always check whether the new selected base model supports training with audio data. If the previously used base model did not support training with audio data, and the training dataset contains audio, training time with the new base model will drastically increase, and may easily go from several hours to several days and more. This is especially true if your Speech service subscription is not in a region with the dedicated hardware for training.

If you face this issue, you can quickly decrease the training time by reducing the amount of audio in the dataset or removing it completely and leaving only the text. The latter option is highly recommended if your Speech service subscription is not in a region with the dedicated hardware for training.

Related text sentences can primarily reduce substitution errors related to misrecognition of common words and domain-specific words by showing them in context. Domain-specific words can be uncommon or made-up words, but their pronunciation must be straightforward to be recognized. Avoid related text sentences that include noise such as unrecognizable characters or words.

Add new words with pronunciation

Words that are made-up or highly specialized may have unique pronunciations. For example, to recognize Xbox, pronounce as X box. This approach will not increase overall accuracy, but can increase recognition of these keywords. This technique is only available for some languages at this time. See customization for pronunciation in the Speech-to-text table for details.
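As an illustration of the Xbox example, the sketch below builds the text of a pronunciation list in Python. The layout is an assumption, not something stated in this article: one entry per line, with the displayed word first, a tab, and then the lowercase spoken form. The function name `pronunciation_lines` is hypothetical, so check the pronunciation customization details for your language before relying on this format.

```python
from typing import Mapping


def pronunciation_lines(entries: Mapping[str, str]) -> str:
    """Build tab-separated "display form<TAB>spoken form" lines.

    Assumption: the spoken form is written in lowercase, one entry per line.
    """
    lines = []
    for display, spoken in entries.items():
        if "\t" in display or "\t" in spoken:
            raise ValueError("entries must not contain tab characters")
        lines.append(f"{display}\t{spoken.lower()}")
    return "\n".join(lines) + "\n"


# Example entry taken from the text: recognize "Xbox" when spoken as "X box".
print(pronunciation_lines({"Xbox": "X box"}), end="")
```

Keeping the entries in a mapping makes it easy to review the list for duplicates before saving it to a file.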


Author: Jennifer
